Multimedia Understanding meets Social Media Analysis

Project Goal

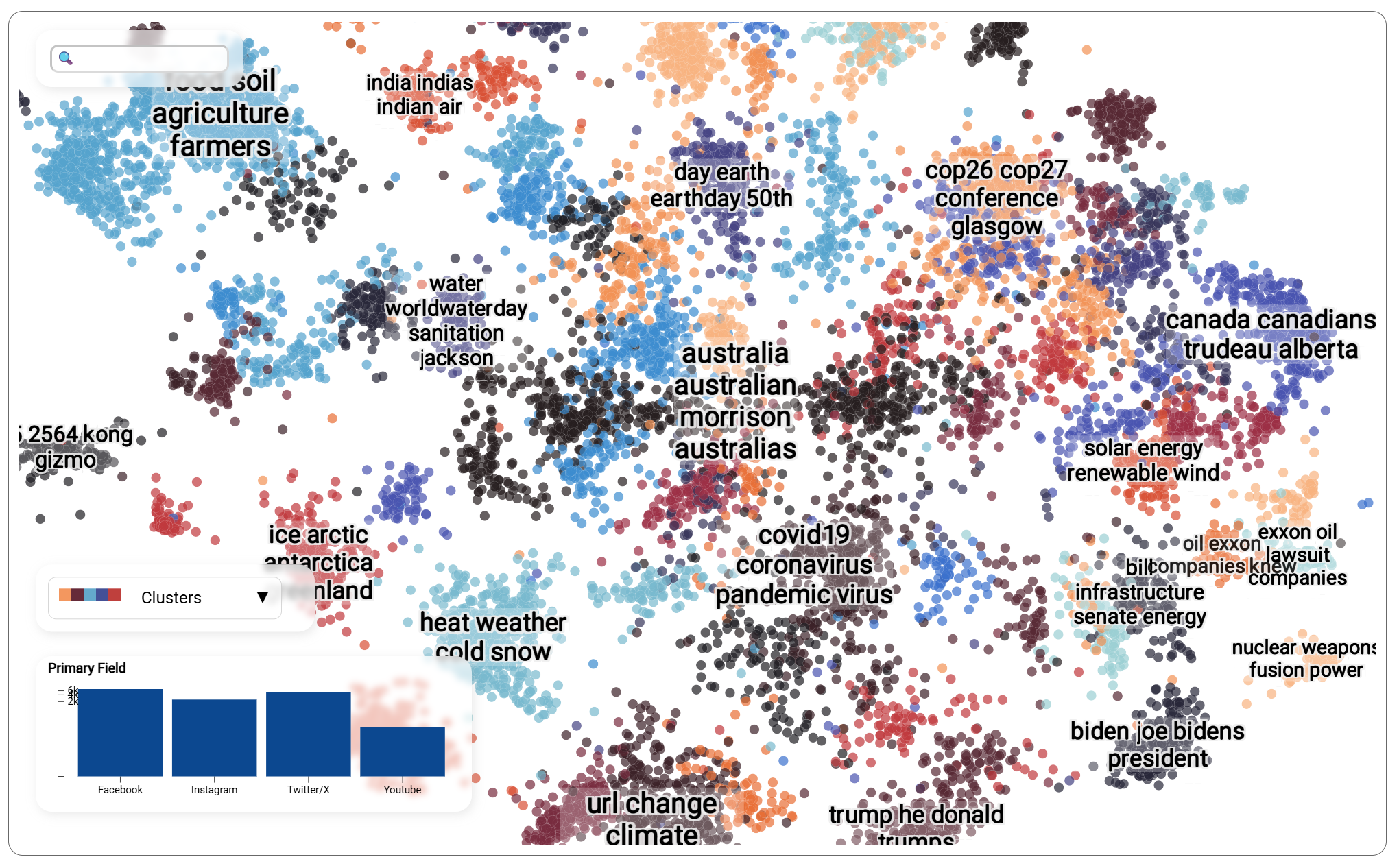

Social media is no longer just words. Images, videos, networks, and even virtual worlds shape how information spreads and how opinions form.

MUSMA develops next-generation AI models that jointly analyse language, visuals, and social interactions to understand online behaviour at scale. By bridging Network Science, NLP, and Computer Vision, the project enables:

- tracking how conversations and narratives evolve over time;

- detecting misinformation, coordinated manipulation, and emerging communities;

- analysing how users consume, trust, and amplify information.

MUSMA goes beyond traditional social platforms, extending its methods to metaverse environments: immersive, interactive spaces that represent the future of online social interaction. The result is a unified framework for studying complex, multimedia digital ecosystems.

The analyses presented on this website showcase selected outputs of the MUSMA monitoring framework. Because data access policies differ across platforms, the results are drawn from different datasets and scenarios, each chosen to illustrate a specific component of the pipeline. Together, they demonstrate how MUSMA methods can be applied to monitor online conversations, multimedia content, and information flows in realistic settings.

Research Units

University of Udine

University of Modena and Reggio Emilia

University of Rome “La Sapienza”